NeuroClaw

NeuroBench

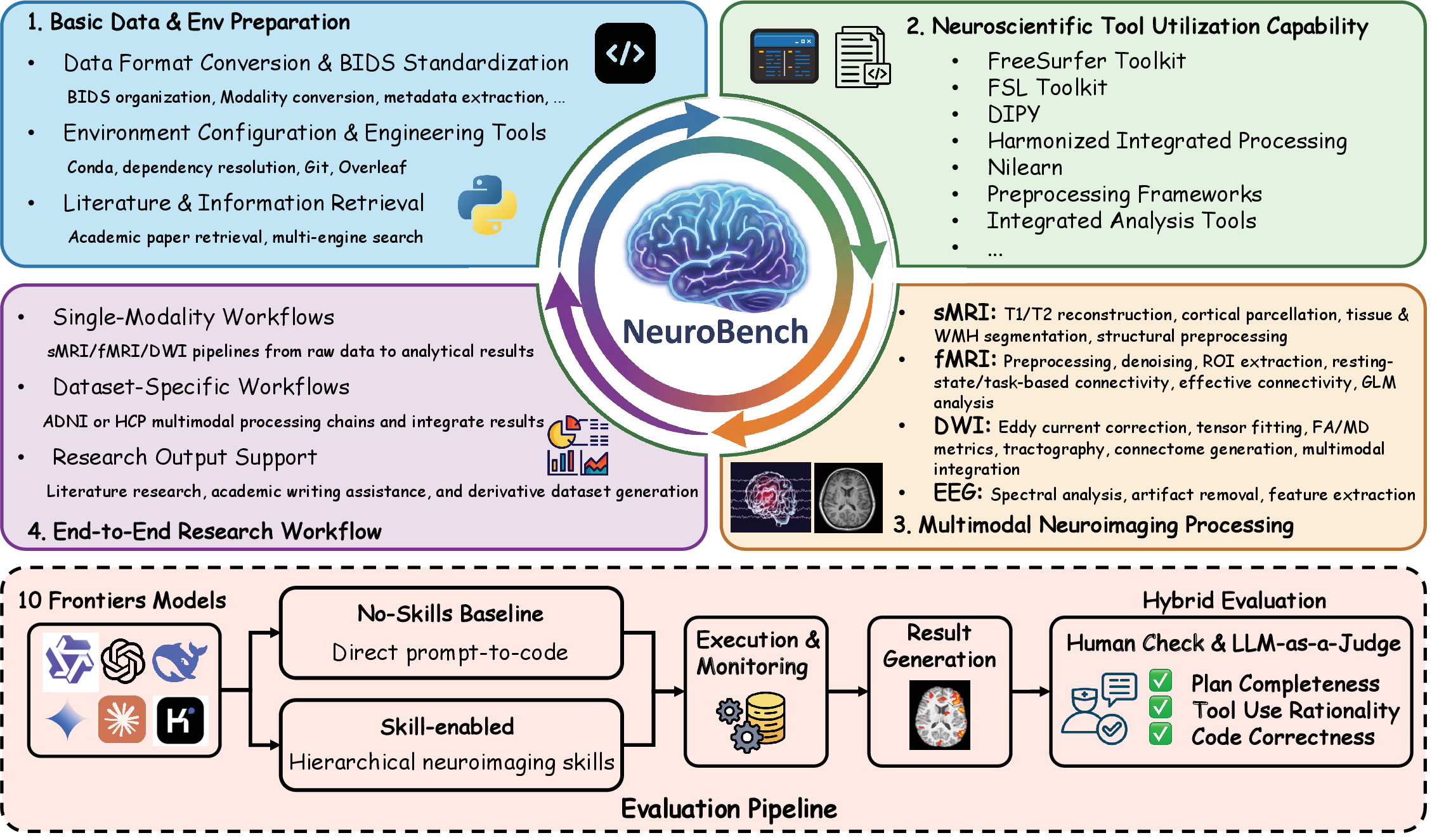

NeuroBench is the benchmark suite used to evaluate end-to-end neuroimaging workflows, reproducibility readiness, and skill-guided execution.

Coverage: structural MRI, functional MRI, diffusion MRI, EEG, and multimodal integration tasks.

Evaluation criteria: planning quality, tool/skill reasonableness, code/command correctness, and reproducibility readiness.

Task format: each task directory contains a task.md instruction file with explicit inputs, outputs, and checks.
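To make the task layout concrete, here is a minimal Python sketch that finds task directories under a benchmark root and flags any task.md that lacks an inputs, outputs, or checks section. The directory layout, the section names, and the helpers find_tasks and missing_sections are hypothetical; the actual task.md files may be organized differently.

```python
from pathlib import Path

# Hypothetical section names; real task.md files may use different headings.
REQUIRED_SECTIONS = ("inputs", "outputs", "checks")


def find_tasks(root: Path) -> list[Path]:
    """Return every directory under `root` that contains a task.md file, sorted."""
    return sorted(p.parent for p in root.rglob("task.md"))


def missing_sections(task_md: Path) -> list[str]:
    """List required section names that do not appear as a heading-like line."""
    # Treat every non-empty line, minus leading '#' and a trailing ':', as a possible heading.
    headings = {
        line.lstrip("#").strip().rstrip(":").lower()
        for line in task_md.read_text(encoding="utf-8").splitlines()
        if line.strip()
    }
    return [name for name in REQUIRED_SECTIONS if name not in headings]
```

A check like this only confirms that the sections exist; it says nothing about whether the stated inputs, outputs, and checks are correct.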

Benchmark Runs

NeuroBench supports baseline and skill-enabled runs. You can execute it from the Web UI or from the CLI batch runner.

with-skills: use skills loaded from skills/.

no-skills: run without skills for baseline comparison.

--benchmark-compare-skills: run paired variants for the same tasks.

Reports are written to output/.

# Web benchmark mode

python core/agent/main.py --web --benchmark

# CLI benchmark batch runner

python core/agent/main.py --benchmark

# Paired skill comparison

python core/agent/main.py --benchmark --benchmark-compare-skills
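Paired runs are most informative when compared task by task. The sketch below assumes, hypothetically, that per-task scores from the with-skills and no-skills variants have already been loaded into two dictionaries keyed by task name; it illustrates a paired comparison and is not NeuroBench's own comparison code.

```python
from statistics import mean


def paired_deltas(with_skills: dict[str, float], no_skills: dict[str, float]) -> dict[str, float]:
    """Per-task score change (with-skills minus no-skills) for tasks present in both runs."""
    shared = sorted(with_skills.keys() & no_skills.keys())
    return {task: with_skills[task] - no_skills[task] for task in shared}


def mean_delta(deltas: dict[str, float]) -> float:
    """Average change across paired tasks; 0.0 when no task was run in both variants."""
    return mean(list(deltas.values())) if deltas else 0.0
```

For example, paired_deltas({"a": 7.5, "b": 6.0, "c": 9.0}, {"a": 6.0, "b": 6.5}) gives {"a": 1.5, "b": -0.5}, and mean_delta of that result is 0.5. Task "c" is dropped because it has no baseline counterpart, which keeps the comparison strictly paired.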

Use --score-benchmark to score existing reports in output/ with a GPT-5.4 weighted rubric.

python core/agent/main.py --score-benchmark

python core/agent/main.py --score-benchmark --score-workers 8

Run tasks first, then score the generated reports to analyze quality and efficiency.
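A weighted rubric combines the four evaluation criteria into a single score. The actual weights are not given on this page, so the following Python sketch uses hypothetical dimension keys and relative weights purely to show how a weighted average over those criteria can be computed.

```python
# Hypothetical dimension keys and relative weights, for illustration only;
# the real rubric's weights are not documented here.
WEIGHTS = {
    "planning_quality": 3,
    "tool_skill_reasonableness": 2,
    "code_command_correctness": 3,
    "reproducibility_readiness": 2,
}


def weighted_score(scores: dict[str, float], weights: dict[str, float] = WEIGHTS) -> float:
    """Weighted average of per-dimension scores, normalized by the total weight."""
    missing = sorted(weights.keys() - scores.keys())
    if missing:
        raise ValueError(f"missing scores for: {missing}")
    total = sum(weights.values())
    return sum(weights[dim] * scores[dim] for dim in weights) / total
```

With per-dimension scores of 8, 6, 10, and 4 (in the order listed above), the sketch returns 7.4. Normalizing by the total weight keeps the result on the same scale as the per-dimension scores, whatever weights are chosen.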